At the crossroads of software engineering and artificial intelligence, teams must align goals and methods to move from prototype to production. Clear priorities and pragmatic steps help bridge the gap between model research and product delivery.

Real projects reward careful scoping, steady iteration, and attention to both code and data quality. A good plan helps the team avoid common traps and keeps the work grounded in real user needs.

1. Define The Problem And Data Plan

Start with a crisp problem statement that names who benefits, what will change, and which constraints matter for the project timeline. Map out the types of data that are needed, where that data will come from, and how data will be labeled and versioned to support reproducible results.
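One way to make "versioned data" concrete is to record a manifest of content hashes for each dataset snapshot. The sketch below assumes the data lives in local files and that a content hash is an acceptable version identifier. `build_manifest` and its fields are hypothetical and do not follow any specific tool's format.

```python
import hashlib
import json
from pathlib import Path


def file_sha256(path: Path) -> str:
    """Hash a file in chunks so large files do not need to fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_manifest(data_dir: Path, label_schema_version: str) -> dict:
    """Hypothetical manifest: per-file hashes plus one combined dataset version."""
    files = sorted(p for p in data_dir.rglob("*") if p.is_file())
    entries = {str(p.relative_to(data_dir)): file_sha256(p) for p in files}
    # Hash the sorted per-file hashes, so changing any file changes the version.
    combined = hashlib.sha256(json.dumps(entries, sort_keys=True).encode()).hexdigest()
    return {
        "dataset_version": combined[:12],
        "label_schema_version": label_schema_version,
        "files": entries,
    }
```

Storing this manifest next to each training run makes it possible to tell later exactly which data a model saw.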

A few concrete use cases and acceptance criteria help keep model research focused on outcomes that matter in the product. Teams that outline success in measurable terms can move faster and waste less time chasing vague promises.
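Acceptance criteria are easier to enforce when they are written as explicit thresholds that a script can check. This is a minimal sketch. The metric names and threshold values are made up for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Criterion:
    """One measurable acceptance criterion (names and thresholds are illustrative)."""
    metric: str
    threshold: float
    higher_is_better: bool = True

    def passes(self, value: float) -> bool:
        return value >= self.threshold if self.higher_is_better else value <= self.threshold


def evaluate(criteria: list[Criterion], results: dict[str, float]) -> dict[str, bool]:
    """Return pass/fail per criterion; a missing metric counts as a failure."""
    return {c.metric: c.metric in results and c.passes(results[c.metric]) for c in criteria}


criteria = [
    Criterion("recall_at_top5", 0.80),
    Criterion("p95_latency_ms", 200.0, higher_is_better=False),
]
print(evaluate(criteria, {"recall_at_top5": 0.83, "p95_latency_ms": 240.0}))
# {'recall_at_top5': True, 'p95_latency_ms': False}
```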

Treat data governance as part of the engineering scope and not as an afterthought that shows up at the eleventh hour. Agree on privacy requirements, retention windows, and data access patterns early so pipelines can be built to meet operational needs from day one.
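Retention windows are one governance rule that can live directly in pipeline code. The sketch below assumes each record carries a category and a timezone-aware creation time. `RETENTION_DAYS` is a hypothetical policy, not a recommendation.

```python
from datetime import datetime, timedelta, timezone
from typing import Optional

# Hypothetical retention policy: days to keep each record category.
RETENTION_DAYS = {"raw_events": 30, "labels": 365}


def within_retention(category: str, created_at: datetime, now: datetime) -> bool:
    """True if a record is still inside its category's retention window."""
    days = RETENTION_DAYS.get(category)
    if days is None:
        # Unknown categories are not retained, so nothing is kept by accident.
        return False
    return now - created_at <= timedelta(days=days)


def apply_retention(records: list[dict], now: Optional[datetime] = None) -> list[dict]:
    """Drop records whose retention window has expired."""
    now = now or datetime.now(timezone.utc)
    return [r for r in records if within_retention(r["category"], r["created_at"], now)]
```

Treating unknown categories as expired is a deliberately conservative choice. A team could instead raise an error so that new data sources get an explicit policy.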

Good tagging, schema evolution rules, and lightweight audits pay dividends when scaling beyond a few experiments. When data handling is baked into everyday workflows, surprises become less likely and teams can iterate with confidence.
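A schema evolution rule can be expressed as a small compatibility check that runs before new data is accepted. This sketch assumes an "additive changes only" policy and models a schema as a plain dict of field name to `(type_name, required)`. Both the policy and the representation are illustrative assumptions rather than a specific schema registry's format.

```python
# Schemas are {field_name: (type_name, required)}; an illustrative format only.
Schema = dict[str, tuple[str, bool]]


def compatibility_problems(old: Schema, new: Schema) -> list[str]:
    """List violations of a hypothetical 'additive changes only' policy."""
    problems = []
    for name, (old_type, _) in old.items():
        if name not in new:
            problems.append(f"removed field: {name}")
        elif new[name][0] != old_type:
            problems.append(f"type change on {name}: {old_type} -> {new[name][0]}")
    for name, (_, required) in new.items():
        if name not in old and required:
            # Older records lack this field, so making it required breaks reads.
            problems.append(f"new required field: {name}")
    return problems
```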

2. Embed AI Into Development Workflow And Testing

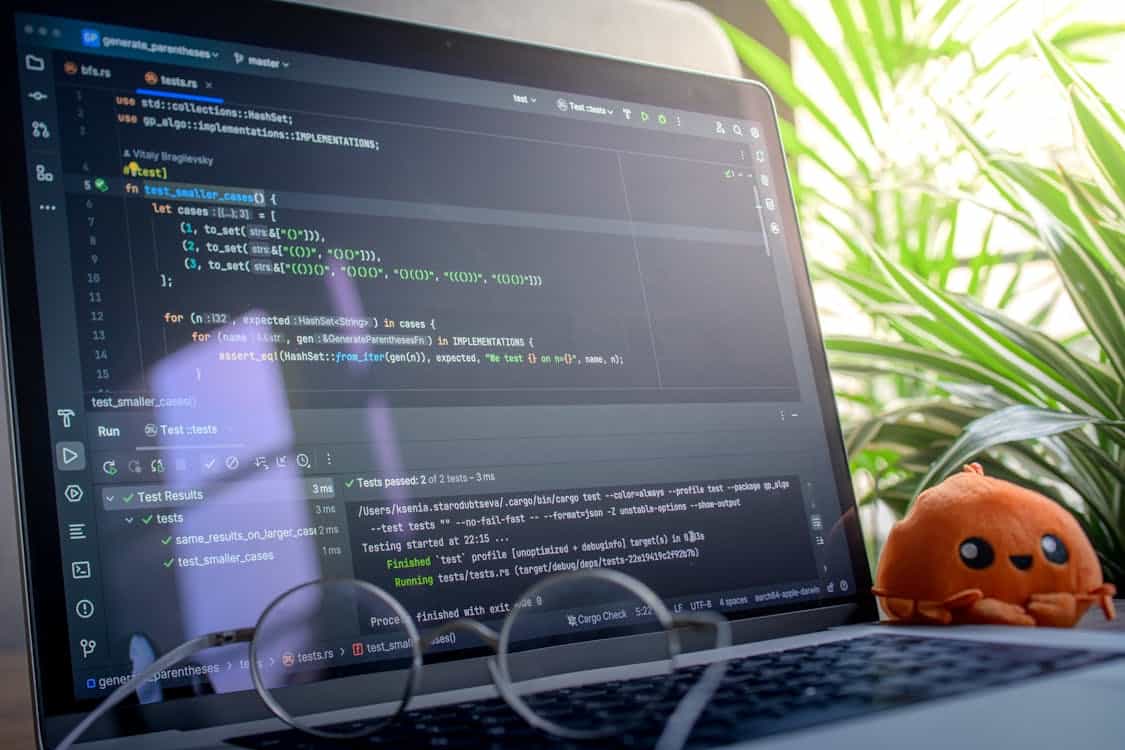

Integrate model training and validation into the same pipelines that run code builds to promote parity between research and production environments.

Continuous training and test suites that include both unit-level checks and dataset-level invariants can catch regressions before they reach users. Treat model artifacts like software artifacts by giving them version tags, reproducible environments, and clear deployment criteria.
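Dataset-level invariants can be written as plain functions and called from the same test runner that executes unit tests. The invariants below (non-empty data, unique IDs, known labels, no NaN features) are illustrative choices, and the row layout is an assumption.

```python
import math


def check_dataset_invariants(rows: list[dict], label_values: set) -> list[str]:
    """Return a list of invariant failures; an empty list means the dataset passes."""
    if not rows:
        return ["dataset is empty"]
    failures = []
    ids = [r["id"] for r in rows]
    if len(ids) != len(set(ids)):
        failures.append("duplicate ids")
    unknown = {r["label"] for r in rows} - label_values
    if unknown:
        failures.append(f"unknown labels: {sorted(unknown)}")
    for r in rows:
        if any(isinstance(v, float) and math.isnan(v) for v in r["features"]):
            failures.append(f"NaN feature in row {r['id']}")
    return failures
```

In CI this might back a test such as `assert check_dataset_invariants(rows, {"spam", "ham"}) == []`, where loading `rows` is left to the project's own data access code.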

Blitzy can seamlessly integrate with your CI/CD pipeline, automating model versioning and deployment while ensuring smooth transitions from research to production. This approach reduces the friction that otherwise appears when a model behaves well in the lab but poorly in the field.

Create automated checks for data drift, feature distribution changes, and downstream performance metrics to close the feedback loop between telemetry and retraining. Safety nets such as shadow deployments or canary releases help observe model behavior with real traffic while keeping risk manageable.
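Drift checks need a concrete statistic. The sketch below uses the Population Stability Index (PSI), which is one common choice among several, to compare a feature's current distribution with a reference sample. Equal-width binning over the reference range and the `eps` floor for empty bins are simplifying assumptions.

```python
import math
from bisect import bisect_right


def psi(reference: list[float], current: list[float], bins: int = 10, eps: float = 1e-6) -> float:
    """Population Stability Index between a reference and a current sample.

    Bins are equal-width over the reference range; values outside that range
    land in the first or last bin. eps keeps empty bins from producing log(0).
    """
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / bins or 1.0
    edges = [lo + width * i for i in range(1, bins)]  # interior bin edges

    def proportions(sample: list[float]) -> list[float]:
        counts = [0] * bins
        for x in sample:
            counts[bisect_right(edges, x)] += 1
        return [max(c / len(sample), eps) for c in counts]

    ref_p, cur_p = proportions(reference), proportions(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref_p, cur_p))
```

A frequently quoted rule of thumb treats PSI above roughly 0.25 as a significant shift. Any alert or retraining threshold should still be tuned per feature.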

Keep test coverage broad enough to include the edge cases that are common in the product domain, so surprises are caught early. A little testing up front is a stitch in time that often saves many late-night repairs.

3. Keep Human Oversight And Focus On Usability

Design interfaces that let humans inspect, correct, and override model outputs when confidence is low or when consequences are significant. Explainability features and clear provenance traces make it easier for product owners and compliance teams to trust the system and act with speed.

User workflows should expose the right controls rather than bury them behind complex settings that invite confusion or overreliance on automation. Human judgment remains a key ingredient when models confront ambiguous or novel situations.

Plan for safe fallbacks that kick in when uncertainty spikes or when data patterns are far from the training set, so users are not left holding the bag. Logging the reasons for fallback decisions and user choices produces valuable signals for future model improvements.
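A fallback rule can be as simple as routing low-confidence or out-of-distribution cases to human review and logging why. This is a sketch under assumptions: the 0.7 threshold is arbitrary, and the `in_distribution` flag is expected to come from whatever out-of-distribution detector the system already has.

```python
import logging
from dataclasses import dataclass

logger = logging.getLogger("fallback")


@dataclass
class Decision:
    label: str    # the model's suggestion, kept so a reviewer can see it
    source: str   # "model" or "human_review"
    reason: str


def route(prediction: str, confidence: float, in_distribution: bool,
          min_confidence: float = 0.7) -> Decision:
    """Send low-confidence or out-of-distribution cases to a human review queue."""
    if not in_distribution:
        reason = "input far from training data"
    elif confidence < min_confidence:
        reason = f"confidence {confidence:.2f} below {min_confidence:.2f}"
    else:
        return Decision(prediction, "model", "confident")
    # Log why the fallback fired so these cases can be reviewed and relabeled later.
    logger.info("fallback: %s (model suggested %r)", reason, prediction)
    return Decision(prediction, "human_review", reason)
```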

Make it easy for frontline staff to provide feedback in natural ways that can be converted into labeled examples for the next training cycle. Feedback loops, fallbacks, and human review act as layered defenses, so no single safeguard has to catch every failure; it is the old advice about not putting all your eggs in one basket.
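Turning feedback into training data mostly means capturing corrections in a structured form. The `Feedback` fields and the `label_source` convention below are hypothetical. This sketch keeps only explicit corrections, although confirmations can also be useful signal.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class Feedback:
    """A correction captured in the product UI (fields are illustrative)."""
    example_id: str
    model_label: str
    user_label: Optional[str]  # None when the user only accepted or skipped
    user_id: str


def to_training_examples(feedback: list[Feedback]) -> list[dict]:
    """Keep explicit corrections as labeled examples for the next training cycle."""
    examples = []
    for fb in feedback:
        if fb.user_label is None or fb.user_label == fb.model_label:
            continue  # no disagreement, so this sketch records no new label
        examples.append({
            "example_id": fb.example_id,
            "label": fb.user_label,
            "label_source": f"frontline:{fb.user_id}",
            "labeled_at": datetime.now(timezone.utc).isoformat(),
        })
    return examples
```

Recording who supplied each label makes it possible to audit or reweight feedback later.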

4. Monitor Models And Operational Signals Continuously

Set up dashboards that blend model-centric metrics with product metrics so the team can quickly see whether model changes improve real outcomes. Key indicators often include calibration, false-positive and false-negative rates, and business-level measures such as conversion or retention tied back to model decisions.
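The model-centric metrics named above can be computed directly from logged predictions. This sketch assumes binary labels with 1 as the positive class. For calibration it uses a binary variant of expected calibration error that compares the mean predicted positive probability with the observed positive rate in equal-width bins.

```python
def confusion_rates(y_true: list[int], y_pred: list[int]) -> dict[str, float]:
    """False-positive and false-negative rates for binary labels (1 = positive)."""
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    negatives = sum(1 for t in y_true if t == 0)
    positives = sum(1 for t in y_true if t == 1)
    return {
        "false_positive_rate": fp / negatives if negatives else 0.0,
        "false_negative_rate": fn / positives if positives else 0.0,
    }


def expected_calibration_error(y_true: list[int], probs: list[float], bins: int = 10) -> float:
    """Weighted gap between mean predicted probability and observed positive rate per bin."""
    total = len(y_true)
    ece = 0.0
    for b in range(bins):
        lo, hi = b / bins, (b + 1) / bins
        # The last bin also includes a probability of exactly 1.0.
        idx = [i for i, p in enumerate(probs) if lo <= p < hi or (b == bins - 1 and p == 1.0)]
        if not idx:
            continue
        avg_prob = sum(probs[i] for i in idx) / len(idx)
        frac_pos = sum(y_true[i] for i in idx) / len(idx)
        ece += len(idx) / total * abs(avg_prob - frac_pos)
    return ece
```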

Alerts should be meaningful and actionable, avoiding noise that trains teams to ignore warnings over time. A pragmatic alerting policy keeps the crew responsive without burning out the on-call rota.
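One common way to cut alert noise is to fire only on a sustained breach rather than on every spike. This sketch alerts when a metric exceeds its threshold in at least `k` of the last `n` checks, and it fires once per episode. The values of `k`, `n`, and the threshold are assumptions to tune per metric.

```python
from collections import deque


class SustainedBreachAlert:
    """Fire when a metric breaches its threshold in k of the last n observations."""

    def __init__(self, threshold: float, k: int = 3, n: int = 5):
        self.threshold = threshold
        self.k = k
        self.window = deque(maxlen=n)  # recent breach flags
        self.active = False

    def observe(self, value: float) -> bool:
        """Record a new value; return True only when the alert newly fires."""
        self.window.append(value > self.threshold)
        breaching = sum(self.window) >= self.k
        fired = breaching and not self.active
        self.active = breaching
        return fired
```

Returning `True` only on the transition into a breach means an ongoing incident pages once instead of on every check.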

Keep an archive of historical model behavior and data snapshots to support root cause analysis when things go off script. Correlating a performance dip with a data schema change or a new release can shorten time to fix and reduce finger-pointing.
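With an archive of change events, a first pass at root cause analysis can simply list the changes that landed shortly before a dip. This is a sketch. The event kinds and the 24-hour lookback are illustrative, and closeness in time is a lead to investigate rather than proof of cause.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class ChangeEvent:
    """An archived change record; the kinds used here are illustrative."""
    at: datetime
    kind: str  # e.g. "model_release", "schema_change", "code_deploy"
    detail: str


def candidate_causes(events: list[ChangeEvent], dip_at: datetime,
                     lookback: timedelta = timedelta(hours=24)) -> list[ChangeEvent]:
    """Changes inside the lookback window before a dip, most recent first."""
    window_start = dip_at - lookback
    hits = [e for e in events if window_start <= e.at <= dip_at]
    return sorted(hits, key=lambda e: e.at, reverse=True)
```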

Make it routine to retrace steps from anomaly to code or data change so lessons feed into the next planning cycle. When monitoring is systematic, fixes are faster and the system gets steadily more robust.

5. Build Modular Components And Reuse Models Safely

Split concerns so that model logic, feature engineering, and orchestration live in well-defined modules that can be tested and released independently. Reusable components speed development and reduce duplicated effort when teams share reliable primitives across projects.

Maintain clear interfaces and stable contracts between modules so swapping an algorithm or retrained model does not create cascading failures. A modular approach makes it easier to experiment without rewriting the plumbing each time.
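In Python, a stable contract between orchestration and models can be expressed with `typing.Protocol`, so any implementation with the right shape can be swapped in. The `Scorer` interface, the two example models, and the weights are all hypothetical. The point is that `decide` depends only on the contract and checks the part of it (the score range) that would otherwise fail silently.

```python
import math
from typing import Protocol, Sequence


class Scorer(Protocol):
    """Stable contract between orchestration code and any model implementation."""

    version: str

    def score(self, features: Sequence[float]) -> float:
        """Return a score in [0, 1] for one feature vector."""
        ...


class ThresholdBaseline:
    """Simple stand-in model; a retrained model only needs the same contract."""
    version = "baseline-1"

    def score(self, features: Sequence[float]) -> float:
        return 1.0 if sum(features) > 1.0 else 0.0


class LogisticModel:
    """Illustrative linear model with fixed, made-up weights."""
    version = "logistic-2"

    def __init__(self, weights: Sequence[float], bias: float = 0.0):
        self.weights, self.bias = list(weights), bias

    def score(self, features: Sequence[float]) -> float:
        z = self.bias + sum(w * x for w, x in zip(self.weights, features))
        return 1.0 / (1.0 + math.exp(-z))


def decide(model: Scorer, features: Sequence[float], cutoff: float = 0.5) -> bool:
    """Orchestration relies only on the Scorer contract, so models can be swapped."""
    s = model.score(features)
    if not 0.0 <= s <= 1.0:
        raise ValueError(f"{model.version} violated the score range contract: {s}")
    return s >= cutoff
```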

When reusing a pretrained model or a shared service, document assumptions about input data, expected distributions, and failure modes so downstream teams are not caught off guard. Keep fine-tuning and adaptation steps explicit so drift does not silently undermine performance for a particular market or user segment.
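Documented assumptions are most useful when they are also checked at call time. This sketch encodes a shared model's expected features, training ranges, and covered locales. The field names and values are illustrative assumptions, not a model-card standard.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InputAssumptions:
    """Documented expectations for a shared model (fields are illustrative)."""
    feature_names: tuple[str, ...]
    ranges: dict[str, tuple[float, float]]  # feature -> (min, max) seen in training
    supported_locales: frozenset[str]


def assumption_violations(a: InputAssumptions, row: dict, locale: str) -> list[str]:
    """Check one input against the documented assumptions before calling the model."""
    issues = []
    missing = [f for f in a.feature_names if f not in row]
    if missing:
        issues.append(f"missing features: {missing}")
    for name, (lo, hi) in a.ranges.items():
        if name in row and not lo <= row[name] <= hi:
            issues.append(f"{name}={row[name]} outside training range [{lo}, {hi}]")
    if locale not in a.supported_locales:
        issues.append(f"locale {locale!r} not covered by training or fine-tuning data")
    return issues
```

Violations could feed the same fallback and monitoring paths described earlier, rather than silently producing a score.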

Encourage small experiments that validate reuse in context before a wide rollout. Good reuse is about smart borrowing and careful testing rather than blind faith.
